Some of you said that what I suggested was not that trivial/easy for you to do by yourselves and asked if there was an easier way to achieve such a score. The short answer is yes.

In this article, I am going to present a shortcut on how to get a 100% performance rating (for the theme mentioned in the previous article) without writing any additional code — just by adding a few parameters to .htaccess.

Please bear in mind that if you have never been involved with the internals of hosting, some of this may still be jargon to you. Therefore, it may be best to pay anything between $100 and $1000 for someone like myself to do this tuning for you. If you think that this is a lot of money for the implementation time, consider that such rates are justified by the fact that you are also paying for the years (if not decades) of work that it took us to reach this level of expertise. But if you are money conscious, I think that you should save your money by trying it out yourself first.

It is worth mentioning, however, that one of my clients, femme-fatale.gr, managed to triple its income within a year just by improving the speed of the user experience: triple the income with only a 25% increase in absolute unique visitors.

Why I was not happy with scoring “just” 98%

If all your life as an engineer you have been involved with performance, even a 98% is not good enough. You keep asking yourself “what do I have to do to get 100%?”.

I am not saying that you are unprofessional if you settle for less — after all, engineering is the art and craft of trade-offs — I am just explaining myself as to why I wanted to bring this to the limit.

Mind you, between 1996 and 2005 I was an active beta tester of Microsoft MDAC (the interface that was used to connect web servers with databases). There we were measuring speed in terms of CPU clock cycles. Nowadays we take performance for granted, but back in the day our OSes were not as good or stable as they are now, memory handling was poor, and the drivers were rather immature. Even so, we could anticipate that the speed and reliability of the connection between the web server and the database would be one of the most crucial factors if one wanted to remain competitive in this… race.

This approach is not limited to website performance; it extends to the performance of my personal computer. This obsession led to the creation of one of the fastest kits in the UK in terms of memory bandwidth (>80GB/s) and disk throughput (>13GB/s).

What does it take to boot Windows 10 in 5 seconds?

It took me three stories with over three and half thousand viewers, together with many commentators and new friends to…

So instead of… congratulating myself for scoring 1% better than the theme maker’s installation, I was furious that I was 2% short of perfection. As far as I was concerned, all my tuning work was wasted. Redemption could only come with that 100% 🙂

Why in the previous test I got “just” 98%

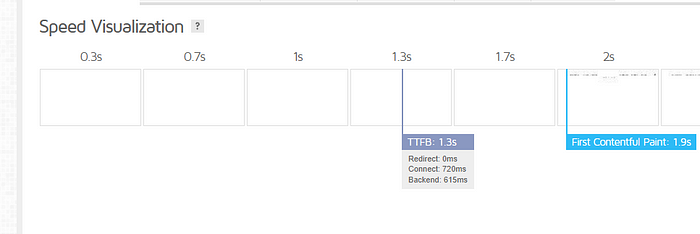

I dug a little deeper and realized that a likely key factor preventing perfection was the unreliable host, which was offering, at times, a TTFB (time to first byte) of over 1 second. This is the time it takes for the host to wave hello to the request that it got. Kind of… “OK client, know that I got the request, I am going to start serving it”.

I noticed that the TTFB of my then host ranged between 0.3 and 1.5s!
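If you want to see this spread for yourself, here is a minimal Python sketch of how one could time it. It is not the tool I used for the figures above; the function names `measure_ttfb` and `summarize` are made up for illustration, and the moment the response headers arrive is taken as an approximation of the first byte.

```python
import http.client
import statistics
import time
from urllib.parse import urlsplit


def measure_ttfb(url, timeout=10.0):
    """Time one GET request; returns (connect_seconds, ttfb_seconds)."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.hostname, parts.port, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.hostname, parts.port, timeout=timeout)
    try:
        start = time.perf_counter()
        conn.connect()                    # DNS lookup + TCP (and TLS) handshake
        connected = time.perf_counter()
        conn.request("GET", parts.path or "/")
        conn.getresponse()                # returns once status line and headers are in
        first_byte = time.perf_counter()  # approximation of "first byte received"
    finally:
        conn.close()
    return connected - start, first_byte - start


def summarize(samples):
    """Best, median and worst value of a list of timings, in seconds."""
    return {
        "min": min(samples),
        "median": statistics.median(samples),
        "max": max(samples),
    }
```

A single reading tells you little on an unreliable host, so take several and look at the spread, for example `summarize([measure_ttfb("https://example.com/")[1] for _ in range(10)])`, where the URL is a placeholder for your own site.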

So, I thought it best to explore what would happen if my application were hosted on a host with “perfect” connectivity.

Step 1/2: Making use of the AWS benchmark connectivity

Even if you don’t use AWS for all your applications due to cost, you have to give it to the guys at Amazon that their connectivity is as good as it gets. Over the years, they have become the… de facto backbone of the Internet.

So, I set up a thin instance in Lightsail to see what kind of result I get when I am riding “on the backbone”. When I say thin, I mean it: I picked their minimum configuration of 1 Virtual CPU and 2GB RAM, set up the default WordPress theme (not the complex TagDiv theme), and noted the TTFB.

The connect time was between 5 and 40ms at worst! Then I had to explore why the backend was taking so much time.

The next step was to set up a second kit with 2 Virtual CPUs and 8GB RAM to see if the 1 Virtual CPU/2GB kit was inadequate:

To my pleasant surprise… the two kits had identical performance! So our (last) bottleneck was down to the configuration of the server.

With the connectivity/CPU/RAM factors out of the equation, I deleted the expensive instance, and started the actual tuning work on the thin machine (that comes for free for the first three months):

So, I had to install the actual theme, with the data, as well as all the cache and CSS/JS optimization plugins of the application where I had scored 98%. I used the All-in-one migration tool to get a replica of the site. This had a negative impact on the performance of the instance, so it was, just as I expected, a matter of tuning the server to host the particular theme efficiently.

Step 2/2: Finding a decent hosting provider with good TTFB

By searching various geek forums for the hosting companies that have the best TTFB in Europe, I came across SiteGround, a provider unknown to me at the time.

They started in Bulgaria but they have a presence all over Europe. Everybody was talking about the great, friendly service that they offer, coupled with best-in-class performance.

It was Black Friday, they had an offer for their shared hosting package, so I decided to invest $160 for shared hosting, to check the connectivity for myself.

Indeed it is awesome. The connectivity figures are as good as Amazon’s! Amazing!

Then, I installed the full backup and started to measure with the Cloudflare CDN out of the equation (aka no CDN). On my original host, I was getting scores of about 70–75 for the particular application with Google Ads, Tags, etc. Here I was getting about 85–90! But still, there was this issue with the backend.

Then I started fiddling with the wp-config.php and .htaccess to meet the specifications of tagDiv. For example, in my wp-config.php I added:

    define( 'WP_MEMORY_LIMIT', '2048M' );

tagDiv’s minimum requirement is 256MB — WordPress’s default is usually 40MB.

And the performance went consistently higher than 90.

Then I added the following to the .htaccess:

    ##Begin TagDiv Optimization Params
    php_value max_input_vars 6000
    php_value max_execution_time 500
    php_value post_max_size 100M
    php_value upload_max_filesize 100M
    php_value suhosin.post.max_vars 6000
    php_value suhosin.request.max_vars 6000
    <IfModule mod_substitute.c>
    SubstituteMaxLineLength 10M
    </IfModule>
    ##End TagDivs Optimization Params
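Each `php_value` directive has to sit on its own line, and a copy-paste that glues two of them together is easy to miss. Here is a small Python sketch for listing what a .htaccess file actually sets; `parse_php_values` is a hypothetical helper name of mine, not part of any tool, and the sample text simply repeats the block above. Also note that, as far as I know, `php_value` lines in .htaccess are only honoured when PHP runs as an Apache module, so check with your host before relying on them.

```python
def parse_php_values(htaccess_text):
    """Collect `php_value name value` directives into a dict, skipping comments."""
    settings = {}
    for raw_line in htaccess_text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue  # blank line or comment
        fields = line.split(None, 2)
        if len(fields) == 3 and fields[0] == "php_value":
            settings[fields[1]] = fields[2]
    return settings


# Sample input: the same parameters as in the article, one directive per line.
SAMPLE = """\
##Begin TagDiv Optimization Params
php_value max_input_vars 6000
php_value max_execution_time 500
php_value post_max_size 100M
php_value upload_max_filesize 100M
php_value suhosin.post.max_vars 6000
php_value suhosin.request.max_vars 6000
<IfModule mod_substitute.c>
SubstituteMaxLineLength 10M
</IfModule>
##End TagDivs Optimization Params
"""

settings = parse_php_values(SAMPLE)
for name, value in settings.items():
    print(f"{name} = {value}")
```

If the list that comes out is shorter than the number of lines you pasted, two directives have probably been fused on one line.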

It was getting better but still, something was not quite right to me.

I talked with their support and I was fortunate to be put through (at 1 AM, mind you) to one of the top hosting support engineers I have come across, Mariyan Evropov, who looked after me as if the company were his own.

As I was playing with AWS and the full theme at SiteGround, Mariyan set up for me a default WordPress installation in my hosting package, that scored 100%!

We then started to figure out that maybe there were limitations in the hosting package. I told him that I had increased the memory limit to 2048MB but he told me that in the hosting package, this is restricted to 750MB.

Since it is company policy not to raise this value above 750MB on shared hosting, he sold me the Cloud package, which gives us the flexibility to tune it as we like.
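To make the arithmetic explicit, here is a tiny Python sketch. It assumes (my assumption, not something support spelled out) that the host simply clamps whatever you request to its own ceiling; the helper names `to_bytes` and `effective_limit` are made up for illustration. The K/M/G suffixes follow PHP's shorthand, where each step is a factor of 1024.

```python
UNITS = {"K": 1024, "M": 1024 ** 2, "G": 1024 ** 3}


def to_bytes(value):
    """Convert PHP shorthand such as '256M' or '2G' to a number of bytes."""
    value = value.strip().upper()
    if value and value[-1] in UNITS:
        return int(value[:-1]) * UNITS[value[-1]]
    return int(value)  # plain number of bytes


def effective_limit(requested, host_cap):
    """Assumption: the host clamps the requested limit to its own ceiling."""
    return min(to_bytes(requested), to_bytes(host_cap))


requested = "2048M"     # what I set through WP_MEMORY_LIMIT
host_cap = "750M"       # shared-hosting ceiling quoted by support
theme_minimum = "256M"  # tagDiv's stated minimum

limit = effective_limit(requested, host_cap)
print(limit // 1024 ** 2, "MB effective")
print("meets theme minimum:", limit >= to_bytes(theme_minimum))
```

Under that assumption my 2048M was never in force: the package was running at 750MB, still above the theme's 256MB minimum but well below what I thought I had configured.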

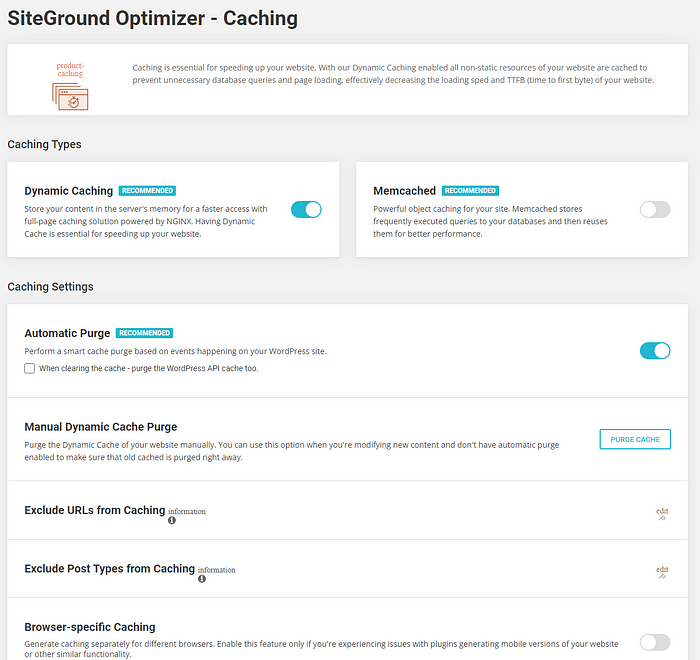

So we started working on the Cloud Package. We stripped the application from all external plugins for cache and optimization and used SiteGround’s Optimizer that contains everything in one place:

With those settings, even forfeiting the local serving of the fonts and using Google fonts instead, we got 100% in our complex — reference — theme.

One application based on the same theme, serving over 6,000 articles and 10,000 images (with 120,000 thumbnails), with Google Analytics, Google AdSense and Google Search connected via Site Kit, now gets the following ratings:

Overall Performance:

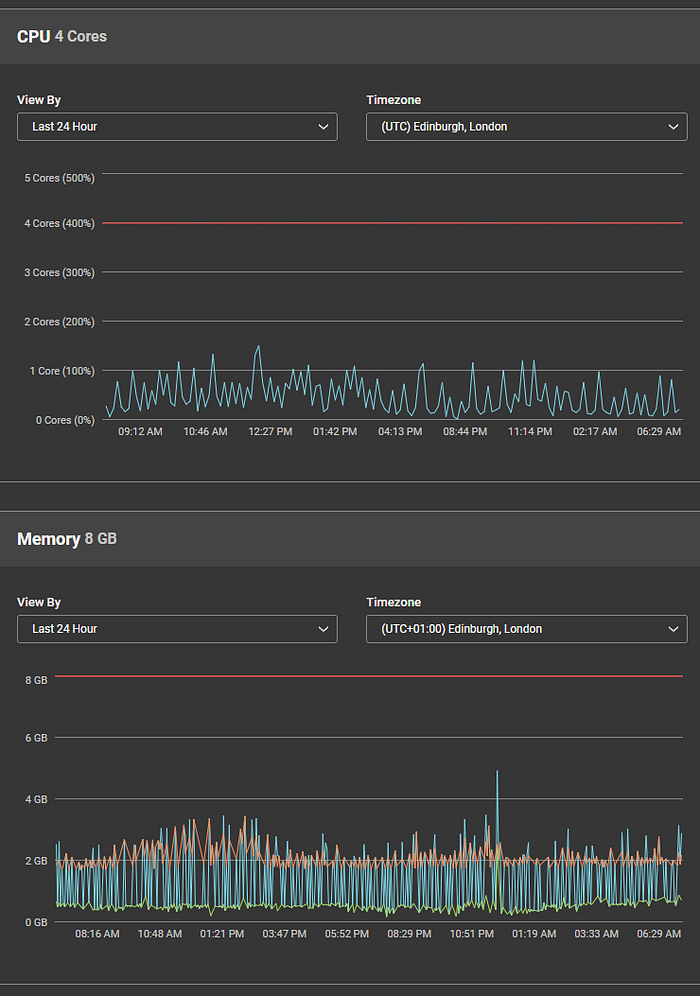

Now let’s look at the performance of the kit:

In Conclusion:

I hope this concludes the topic about performance optimization. As said in the first part, one cannot overestimate the significance of the speed of the delivery of your content to the success of your site.

A footnote:

One friend who happened to see two of my latest posts in his LinkedIn timeline got worried about my career and asked how I combine “mere” website tuning with more serious (to him, I guess) stuff, like recommendations on the metadata policy for the US DoD through my involvement with the Linux Foundation’s AI & Big Data Egeria initiative.

Both deal with speed and better service, and both require some rather extensive experience. Besides, I would rather have a good driver who knows the detailed mechanics of his car and can fix it than a driver who used to have this knowledge but is currently unaware of important aspects of its mechanics.

Sadly, we have this preconception that website development should be left to poorly trained, inexperienced young people, and that seniors should just be managers, bs*ing their way into retirement, and be ashamed when they perform some productive work.

One of my heroes at Ford opted to remain an Engineering Supervisor at its pioneering development centre (Ford Alpha) rather than become an Executive Engineer, so that he could continue doing what he enjoyed, state-of-the-art engineering, just like Henry Ford himself. Another, the guy who in 1995 introduced me to the world of traditional media (and urged me to join their digital divisions), was coding until he died aged 80. Both loved their work. The same applies to me. I am just blessed to be doing what I have always liked and still find interesting.

So, thanks for the interest, but this is a matter of opinion, not of coincidence or an imposed need. I do not know of anyone who gets 100% in any kind of exam or competition by thinking and doing what others think is good for him or her to think and do.